Implementing a many-to-many relationship in a relational database is not as straightforward as a one-to-many relationship because there is no single command to accomplish it. The same holds true for MongoDB. As a matter of fact, you cannot implement any type of relationship in MongoDB via a command. However, because a document can store arrays, you can store the data in a way that is fast to retrieve and easy to maintain, and that gives you the information you need to relate two documents in your code.

Modeling in a relational database

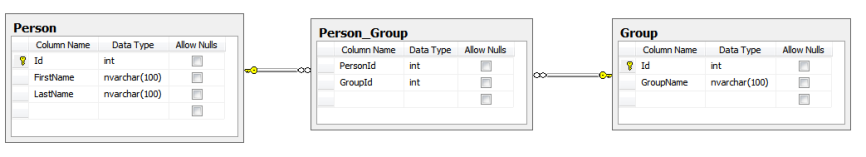

In the past, I have modeled many-to-many relationships in relational databases using a junction table. A junction table is simply a table that stores the keys from the two parent tables to form the relationship. In the diagram above, there is a many-to-many relationship between the person table and the group table, and the person_group table is the junction table.

Modeling in MongoDB

Using the schemaless nature of MongoDB and arrays, you can build the same data model and, with the appropriate indexes, keep query times short. Basically, you store an array of ObjectIds from the group collection in each person document to identify which groups that person belongs to. Likewise, you store an array of ObjectIds from the person collection in each group document to identify which persons belong to that group. You can also store DBRefs in the array, but that would only be necessary if your collection names will change over time.

This is how some person documents would look in MongoDB.
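As a minimal sketch, two such person documents might look like the following. The field names `firstName`, `lastName`, and `groups`, along with every id value, are assumptions chosen for illustration, and 24-character hex strings stand in for real ObjectIds so the snippet also runs outside the shell.

```javascript
// Hypothetical ids: hex strings stand in for the ObjectIds that MongoDB
// would normally generate for each document.
const userGroupId = "4e54ed9f48dc5922c0094a41";
const adminGroupId = "4e54ed9f48dc5922c0094a42";

const persons = [
  {
    _id: "4e54ed9f48dc5922c0094a43",
    firstName: "Joe",
    lastName: "Mongo",
    // Joe belongs to both groups.
    groups: [userGroupId, adminGroupId],
  },
  {
    _id: "4e54ed9f48dc5922c0094a44",
    firstName: "Sally",
    lastName: "Mongo",
    // Sally belongs to the user group only.
    groups: [userGroupId],
  },
];

// In the MongoDB shell the same documents could be stored with:
//   db.person.insertMany(persons)
console.log(JSON.stringify(persons, null, 2));
```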


This is how some group documents would look in MongoDB.
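A matching sketch for the group side, under the same assumptions: the `name` and `persons` field names and all id values are hypothetical, and hex strings again stand in for ObjectIds.

```javascript
// Hypothetical ids of the two person documents.
const joeId = "4e54ed9f48dc5922c0094a43";
const sallyId = "4e54ed9f48dc5922c0094a44";

const groups = [
  {
    _id: "4e54ed9f48dc5922c0094a41",
    name: "mongoDB User",
    // Both Joe and Sally are members.
    persons: [joeId, sallyId],
  },
  {
    _id: "4e54ed9f48dc5922c0094a42",
    name: "mongoDB Administrator",
    // Only Joe is a member.
    persons: [joeId],
  },
];

// In the MongoDB shell the same documents could be stored with:
//   db.group.insertMany(groups)
console.log(JSON.stringify(groups, null, 2));
```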


The documents above show that “Joe Mongo” belongs to the “mongoDB User” and “mongoDB Administrator” groups. Similarly, “Sally Mongo” only belongs to the “mongoDB User” group. Effectively, these two arrays make up the data that is stored in the person_group table from the relational database example.

If you choose, you can store the array on only one side, either on the person documents or on the group documents, but that would make your queries somewhat more complicated.
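To illustrate the extra work, here is a rough in-memory sketch of the one-sided variant, assuming only the person documents carry a `groups` array. Listing a person's groups then takes two steps: fetch the person, then fetch the groups whose `_id` appears in that array. The helper name `lookupGroupsFor`, the field names, and the ids are all hypothetical.

```javascript
const userGroupId = "4e54ed9f48dc5922c0094a41";
const adminGroupId = "4e54ed9f48dc5922c0094a42";
const sallyId = "4e54ed9f48dc5922c0094a44";

// Only the person side carries the relationship.
const persons = [
  { _id: "4e54ed9f48dc5922c0094a43", firstName: "Joe", groups: [userGroupId, adminGroupId] },
  { _id: sallyId, firstName: "Sally", groups: [userGroupId] },
];

// Group documents have no persons array in this variant.
const groups = [
  { _id: userGroupId, name: "mongoDB User" },
  { _id: adminGroupId, name: "mongoDB Administrator" },
];

// Step 1, shell equivalent: const person = db.person.findOne({ _id: personId })
// Step 2, shell equivalent: db.group.find({ _id: { $in: person.groups } })
function lookupGroupsFor(personId) {
  const person = persons.find((p) => p._id === personId);
  if (!person) return [];
  return groups.filter((g) => person.groups.includes(g._id));
}

console.log(lookupGroupsFor(sallyId).map((g) => g.name));
```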

Getting the data

The following queries show how to retrieve the data without the joins you would need in a relational database.
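One way such queries could look is sketched below in plain JavaScript, with the corresponding shell query noted above each helper. The sketch relies on the fact that a MongoDB query on an array field matches when any element equals the given value. The helper names, field names, and ids are assumptions for illustration.

```javascript
const userGroupId = "4e54ed9f48dc5922c0094a41";
const adminGroupId = "4e54ed9f48dc5922c0094a42";
const joeId = "4e54ed9f48dc5922c0094a43";
const sallyId = "4e54ed9f48dc5922c0094a44";

const persons = [
  { _id: joeId, firstName: "Joe", lastName: "Mongo", groups: [userGroupId, adminGroupId] },
  { _id: sallyId, firstName: "Sally", lastName: "Mongo", groups: [userGroupId] },
];
const groups = [
  { _id: userGroupId, name: "mongoDB User", persons: [joeId, sallyId] },
  { _id: adminGroupId, name: "mongoDB Administrator", persons: [joeId] },
];

// Shell equivalent: db.person.find({ groups: groupId })
// Returns every person whose groups array contains groupId.
function personsInGroup(groupId) {
  return persons.filter((p) => p.groups.includes(groupId));
}

// Shell equivalent: db.group.find({ persons: personId })
// Returns every group whose persons array contains personId.
function groupsOfPerson(personId) {
  return groups.filter((g) => g.persons.includes(personId));
}

console.log(personsInGroup(adminGroupId).map((p) => p.firstName));
console.log(groupsOfPerson(sallyId).map((g) => g.name));
```

Because each side stores its own array, either direction of the relationship is answered by a single query against a single collection.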


To improve the performance of the queries above, you should create indexes on the person.groups field and the group.persons field. This can be accomplished by using the following commands.
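A hedged sketch of those index definitions, assuming the collections are named `person` and `group`. Indexing an array field produces a multikey index, with one index entry per array element. The snippet only calls `createIndex` when a `db` object is present, as it is in mongosh, and otherwise just prints the equivalent commands.

```javascript
// Index key patterns: 1 means ascending order.
const indexSpecs = [
  { collection: "person", keys: { groups: 1 } },
  { collection: "group", keys: { persons: 1 } },
];

for (const spec of indexSpecs) {
  // `db` exists only inside the shell; skip the call everywhere else.
  if (typeof db !== "undefined") {
    db.getCollection(spec.collection).createIndex(spec.keys);
  }
  console.log(`db.${spec.collection}.createIndex(${JSON.stringify(spec.keys)})`);
}
```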


Summary

In general, it’s pretty straightforward to implement a many-to-many relationship in MongoDB. I have shown one method that closely resembles how it’s done in a relational database. One thing to keep in mind when it comes to data modeling: there is no silver bullet, and you should always create the data model that best fits how your data will be queried.
